When Insight Finds Immediacy

WebOccult + Deeper-i at Japan IT Week 2025

Collaboration is often described as two entities working together, but at Japan IT Week 2025, WebOccult and Deeper-i proved it is something much more profound: it is the convergence of seeing and doing.

This month, we are proud to spotlight our successful co-exhibition at Makuhari Messe, where we unveiled a partnership defined not just by technology, but by a shared philosophy: intelligence should live closer to the world it serves.

The Demo

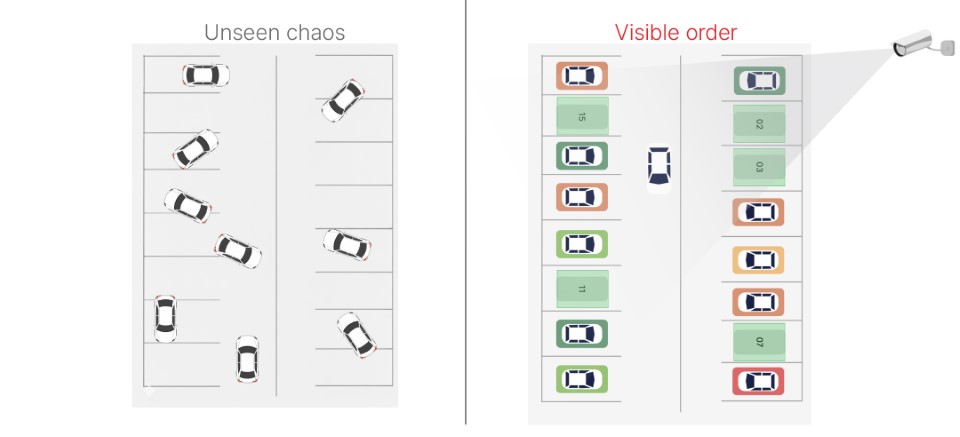

At the center of our booth sat a seemingly simple display: a tabletop model of a parking lot with toy cars. Yet, beneath this modest setup ran a powerful, self-contained ecosystem of intelligence.

Using a camera and a compact processing unit, the system tracked parking occupancy in real time. As cars moved, each space on the screen switched instantly from green (available) to red (occupied).

There was no buffering, no cloud latency, and no network dependency.

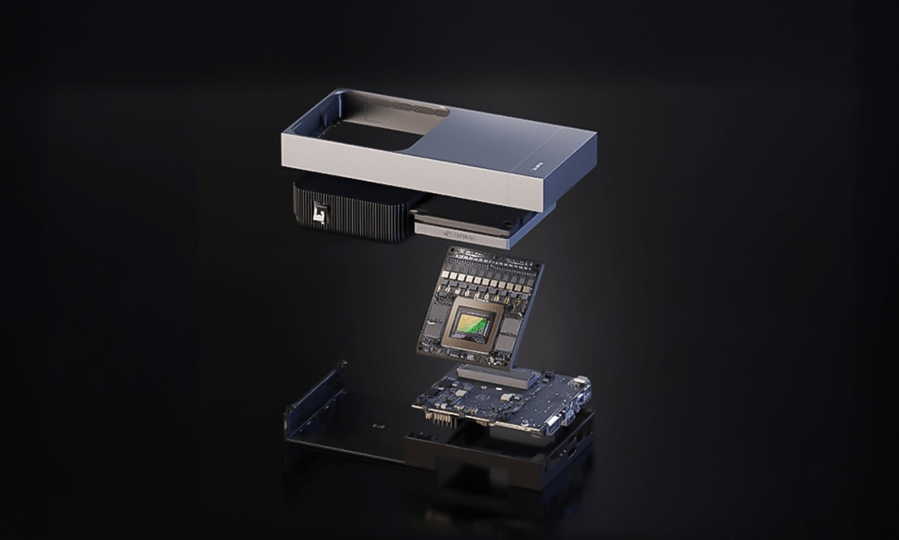

This was the Vision AI + Edge AI loop in action:

- WebOccult’s Gotilo Suite provided the eyes, detecting and classifying patterns.

- Deeper-i’s Tachy Architecture provided the brain, executing deep learning models locally with incredible speed.
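For readers curious how a green/red occupancy display like the one in the demo can be driven, here is a minimal sketch. It is not the Gotilo Suite or Tachy implementation. It assumes a detector has already produced bounding boxes for the toy cars, and that each parking space is a fixed rectangle in the camera image. The names `Box`, `slot_status`, and the `min_cover` threshold are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned rectangle in image coordinates (hypothetical helper type)."""
    x1: float
    y1: float
    x2: float
    y2: float

    def area(self) -> float:
        return max(0.0, self.x2 - self.x1) * max(0.0, self.y2 - self.y1)


def overlap_area(a: Box, b: Box) -> float:
    """Area of the intersection of two boxes, or 0.0 if they do not overlap."""
    w = min(a.x2, b.x2) - max(a.x1, b.x1)
    h = min(a.y2, b.y2) - max(a.y1, b.y1)
    return max(0.0, w) * max(0.0, h)


def slot_status(slots: dict[str, Box], cars: list[Box],
                min_cover: float = 0.5) -> dict[str, str]:
    """Mark a slot 'red' when a single car covers at least min_cover of it.

    min_cover is an assumed threshold, not a value from the demo.
    """
    status = {}
    for name, slot in slots.items():
        covered = max((overlap_area(slot, car) for car in cars), default=0.0)
        occupied = slot.area() > 0 and covered / slot.area() >= min_cover
        status[name] = "red" if occupied else "green"
    return status


# Two parking spaces and one detected car sitting in the first space.
slots = {"A1": Box(0, 0, 10, 20), "A2": Box(12, 0, 22, 20)}
cars = [Box(1, 2, 9, 18)]
print(slot_status(slots, cars))  # {'A1': 'red', 'A2': 'green'}
```

Because the check is a few arithmetic operations per space, it can run on the same local device as the detector, which is consistent with the no-network setup described above.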

Looking Ahead

Japan IT Week was just the rehearsal. The architecture we showcased is reliable, private, and instantaneous, and it is now ready to scale. Whether inspecting surfaces on a production line or optimizing logistics yards, WebOccult and Deeper-i are building a future where intelligence doesn’t wait. It acts.

Simply being normal is the new normal

Do you ever feel like you’re running a marathon at a sprint pace? We are conditioned to believe that momentum is everything. We fly across oceans, we chase the next big contract, and we convince ourselves that stopping is failure.

I was recently in that exact mode. I was halfway across the world, in the middle of a major exhibition in the US, surrounded by opportunity. The energy was high. The schedule was packed. I felt unstoppable. And then, in a single second, everything stopped.

Life has a funny way of reminding you who is really in charge. A sudden breakage. An unexpected physical limitation. Just like that, the meetings didn’t matter. The strategy didn’t matter. The only thing that mattered was getting home. I had to leave everything behind and make an urgent U-turn. When you are forced to hit the brakes that hard, it feels like a sacrifice. You sit there thinking about the what-ifs. You worry about the momentum you’re losing. You feel like you are letting people down.

But as I sat at home over the last few weeks, forced to slow down, my perspective shifted. We spend so much time trying to optimize our lives for growth. We want 10x revenue. We want faster deployments. We want maximum efficiency. But we rarely optimize for maintenance. We’ve heard the term ‘The New Normal’ a million times since 2020. Usually, it refers to remote work or AI adoption.

But for me? I have a different definition now. To simply be normal is the ultimate luxury. Getting back to my routine didn’t feel like a chore; it felt like a gift. Returning to Square One wasn’t a regression. It was a relief. When you experience a breakage, you realize that the baseline, the simple act of being functional, is actually the foundation of everything else. You can’t build a skyscraper if the ground beneath you is shaking.

You don’t need to wait for a breakage to appreciate the routine. Don’t resent the mundane parts of your day. Don’t be so obsessed with the next milestone that you ignore the health and stability that allows you to chase it in the first place.

I’m back at my desk now. I’m back to the grind. But I’m doing it with a little more gratitude for the boring, normal, beautiful routine.

Sometimes, the biggest win is simply having the strength to stand still.

The Intelligent Lens

Why AI is Finally Making Sense of the World

If you look back at how we used technology just a year ago, it feels like a different era because the speed of innovation in 2025 has been absolutely relentless. We used to think of cameras as just digital eyes that passively recorded whatever happened in front of them, but as we close out this year, that definition has completely shifted. The biggest breakthrough this season isn’t just about spotting a car or a person, but understanding the story behind what they are doing.

We are finally moving away from systems that just draw simple boxes around objects and entering a phase where we can actually talk to our cameras. Imagine being able to ask a security feed a plain English question like ‘Is the forklift blocking the emergency exit?’ and getting an immediate, intelligent answer without a human ever needing to look at a monitor. This ability to reason and understand context means that our software is becoming less like a tool and more like an active team member that is always watching out for safety and efficiency.

Another incredible shift we are seeing right now is the ability for standard, inexpensive webcams to understand depth and distance just as well as the human eye. We no longer need expensive laser sensors or complex hardware to measure the size of a package or the distance between vehicles because the new software can figure it all out from a simple flat image. It feels like we are finally moving from a world where computers just watch to a world where they truly understand, and for us at WebOccult, that opens up a universe of possibilities for 2026.

Offbeat Essence – The Luxury of Absence

True intelligence is no longer about how much a machine says, but how intuitively it understands without saying a word.

For a decade, we built technology that begged for attention. We measured innovation by the noise it made, buzzing pockets, flashing screens, and constant alerts.

But as we close 2025, the wind is shifting. The next era isn’t about connection; it’s about anticipation.

We are entering the age of Invisible Intelligence.

True sophistication is no longer about a machine that chats with you, but one that understands you without saying a word. It is the difference between a tool that demands supervision and a partner that quietly clears the path.

The future won’t be defined by the technology you stare at, but by the technology you don’t even notice is there.

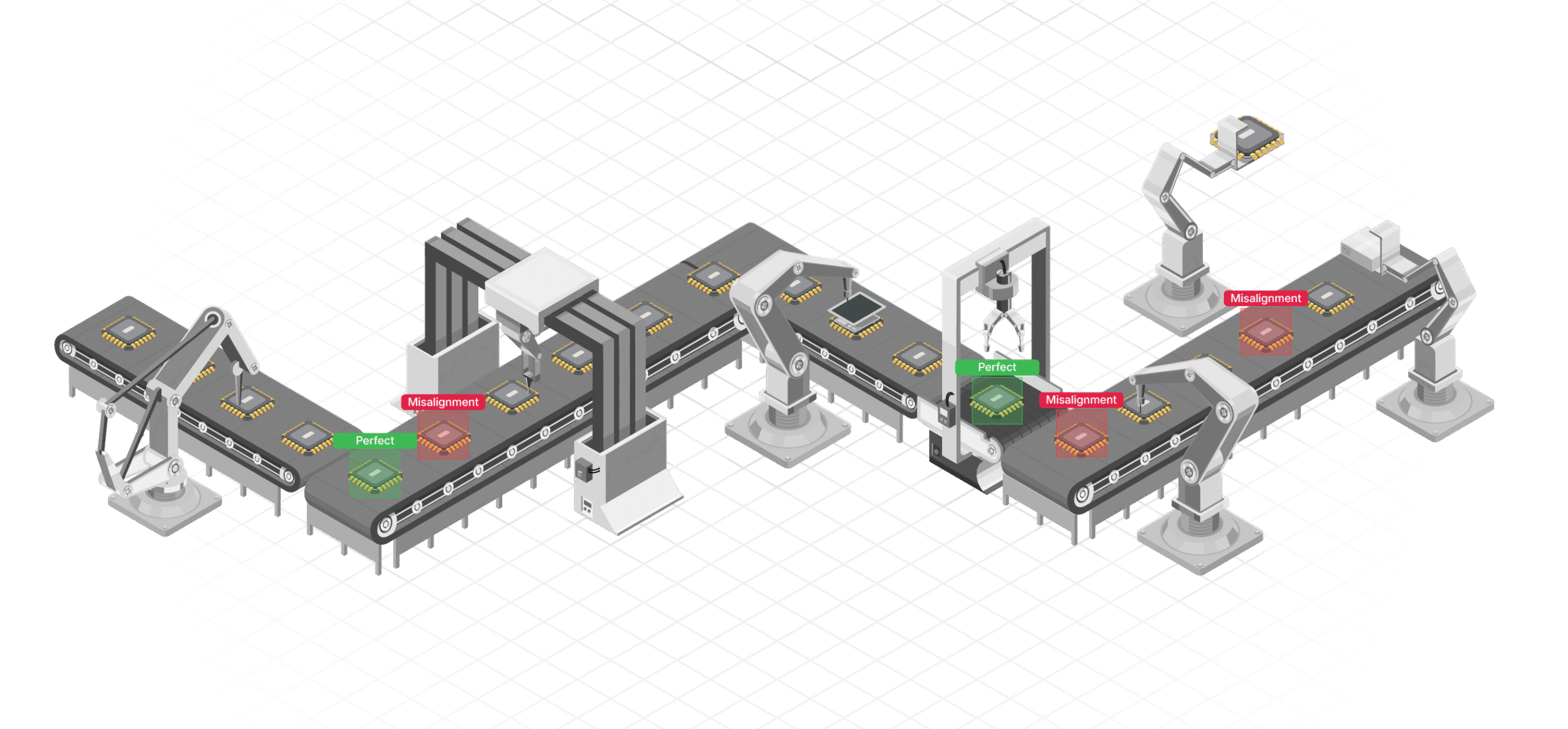

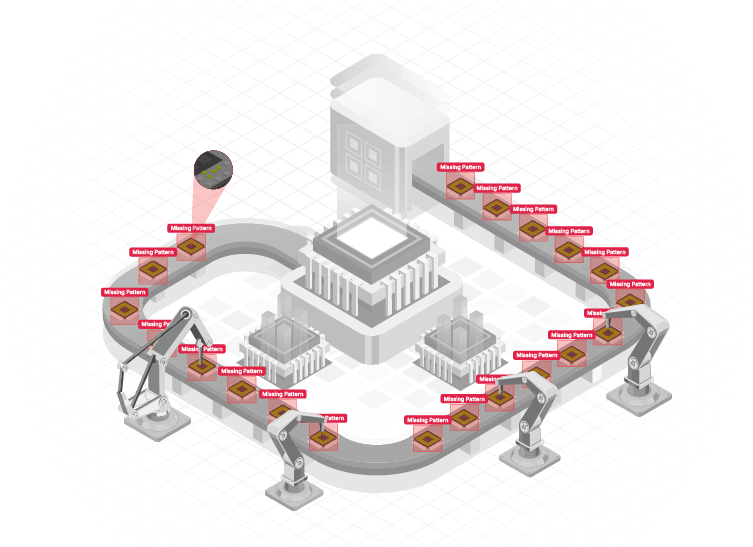

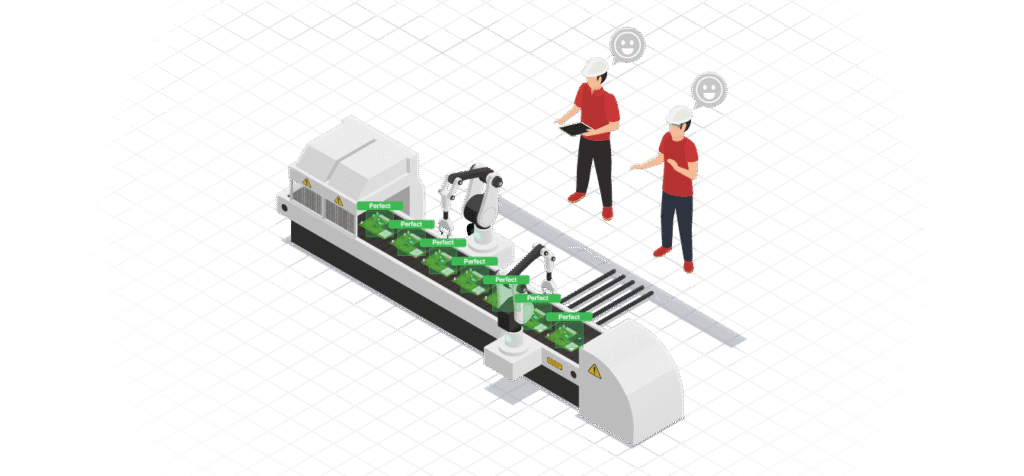

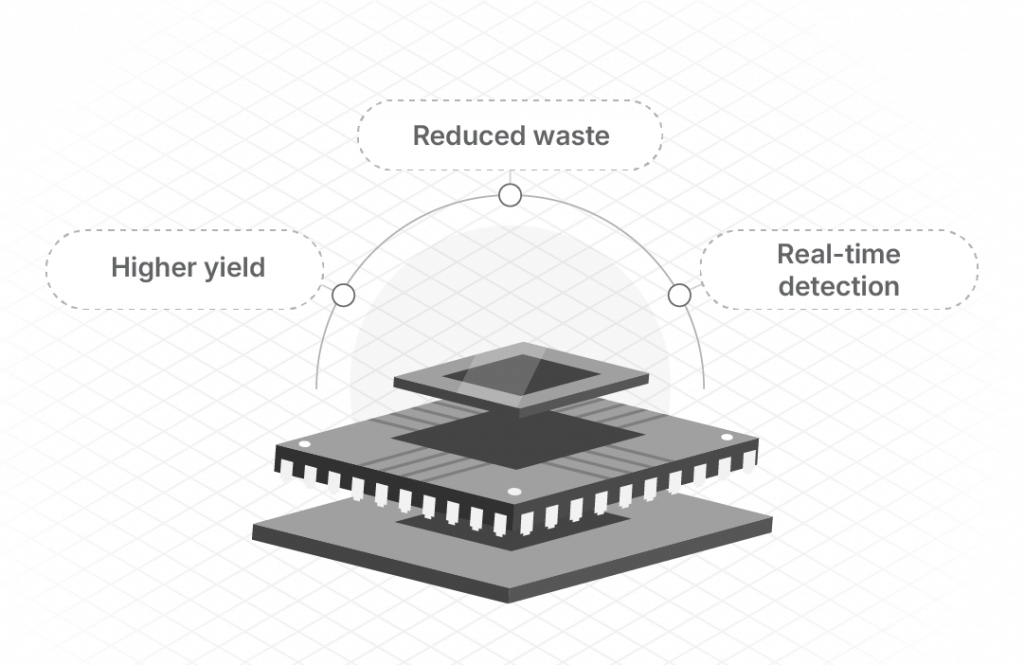

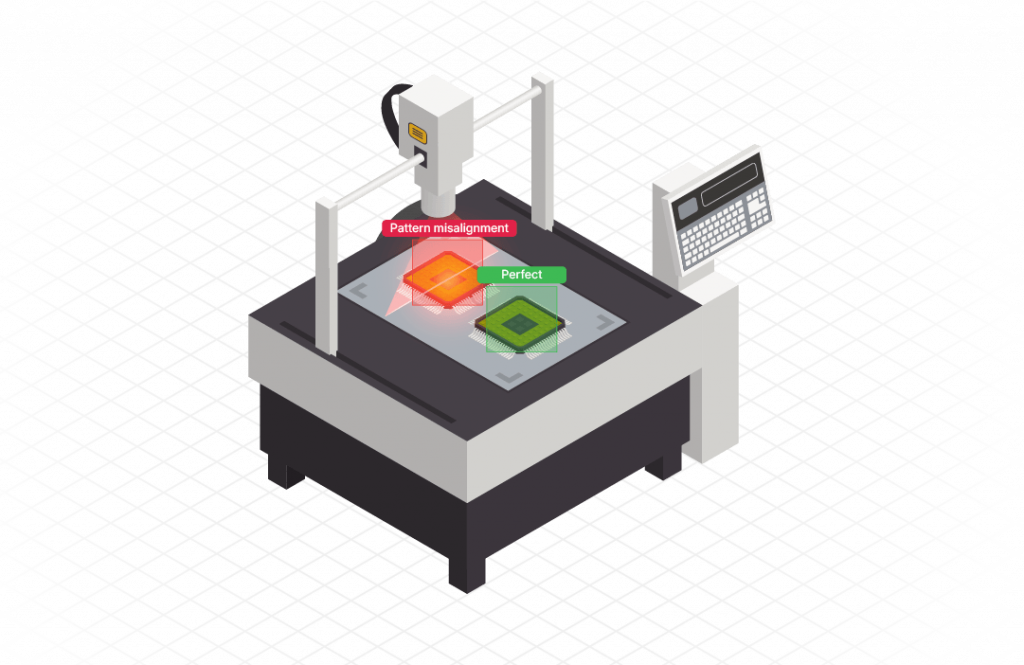

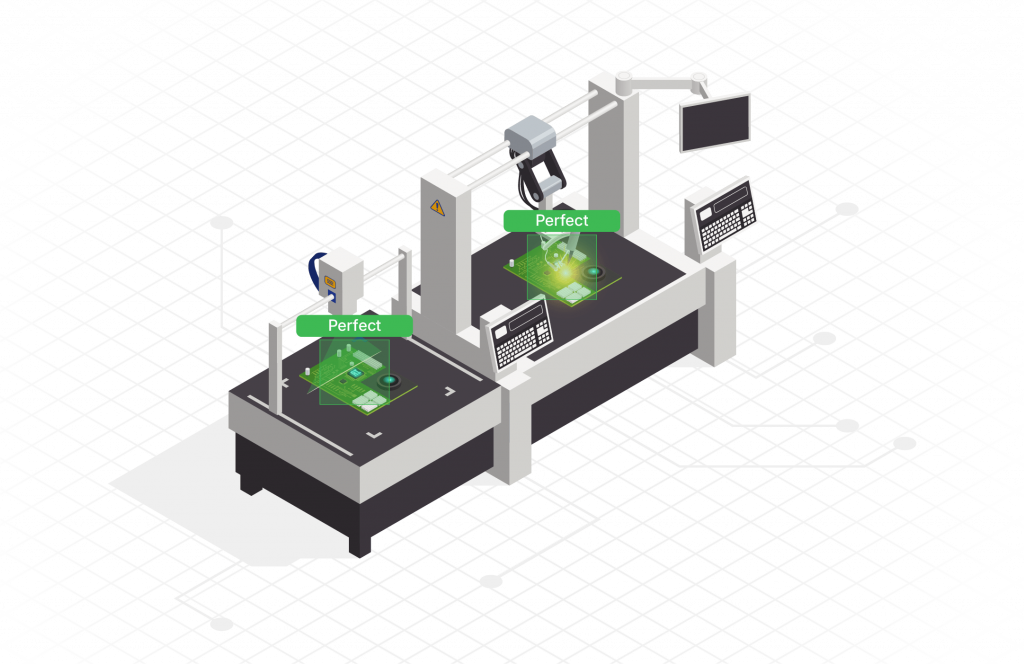

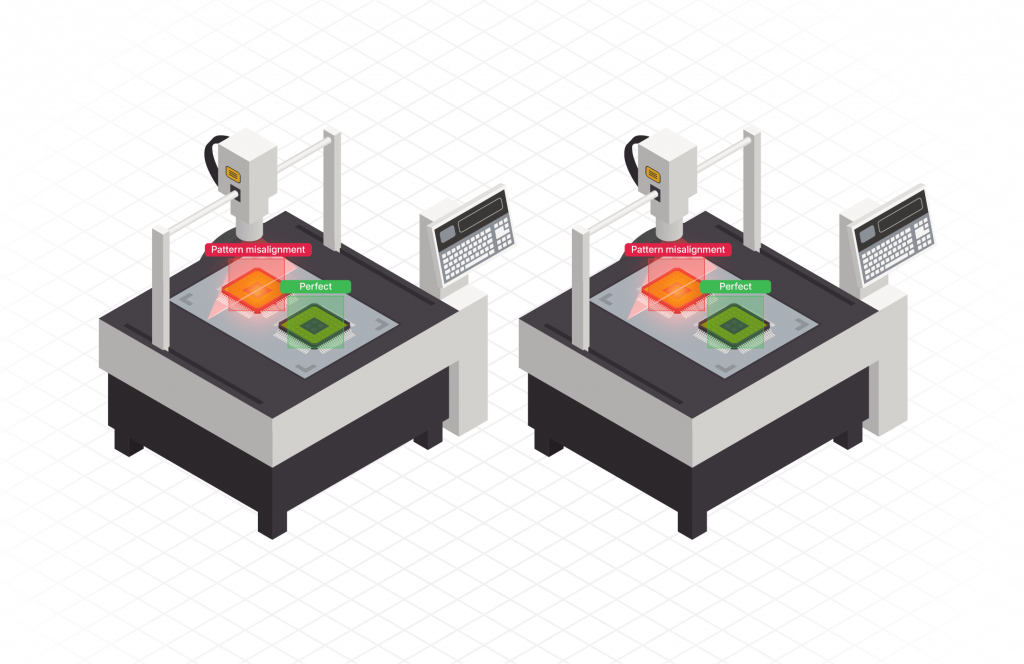

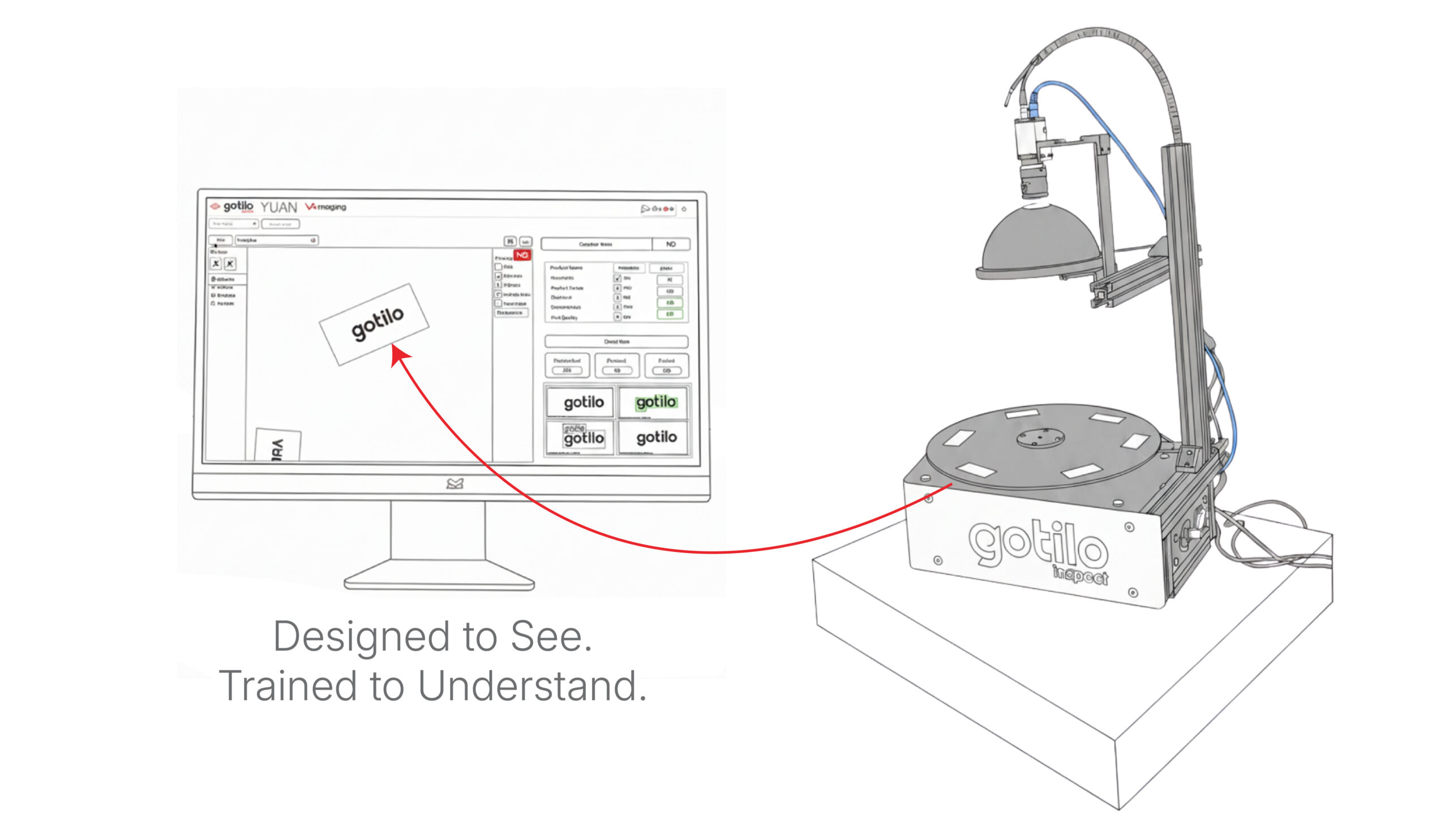

Inside Gotilo Inspect

In the US market, the conversation around manufacturing and logistics has shifted. We are no longer just talking about automation; we are talking about operational resilience. With labor costs rising and quality standards becoming stricter than ever, American businesses can’t afford downtime, and they certainly can’t afford defects.

This is where Gotilo Inspect enters the equation.

I’ve spent the last few months speaking with facility managers across the States, and the pain point is universal: How do we maintain 100% quality without slowing down the line?

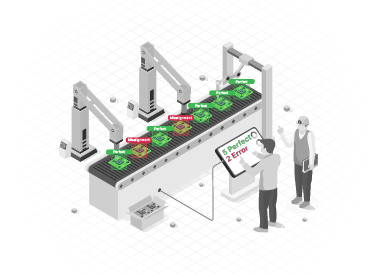

Gotilo Inspect is our answer. It is an AI-powered Visual Inspection system designed not to replace human oversight, but to give it superpowers.

Here is why it’s gaining traction in the US right now:

1. The End of the Random Sample

Traditional QC relies on checking every 10th or 100th unit. Gotilo Inspect offers 100% visibility. Whether it’s detecting surface scratches on automotive parts or verifying label placement on consumer goods, our algorithms check every single unit in real time. We catch the defects that human fatigue misses.

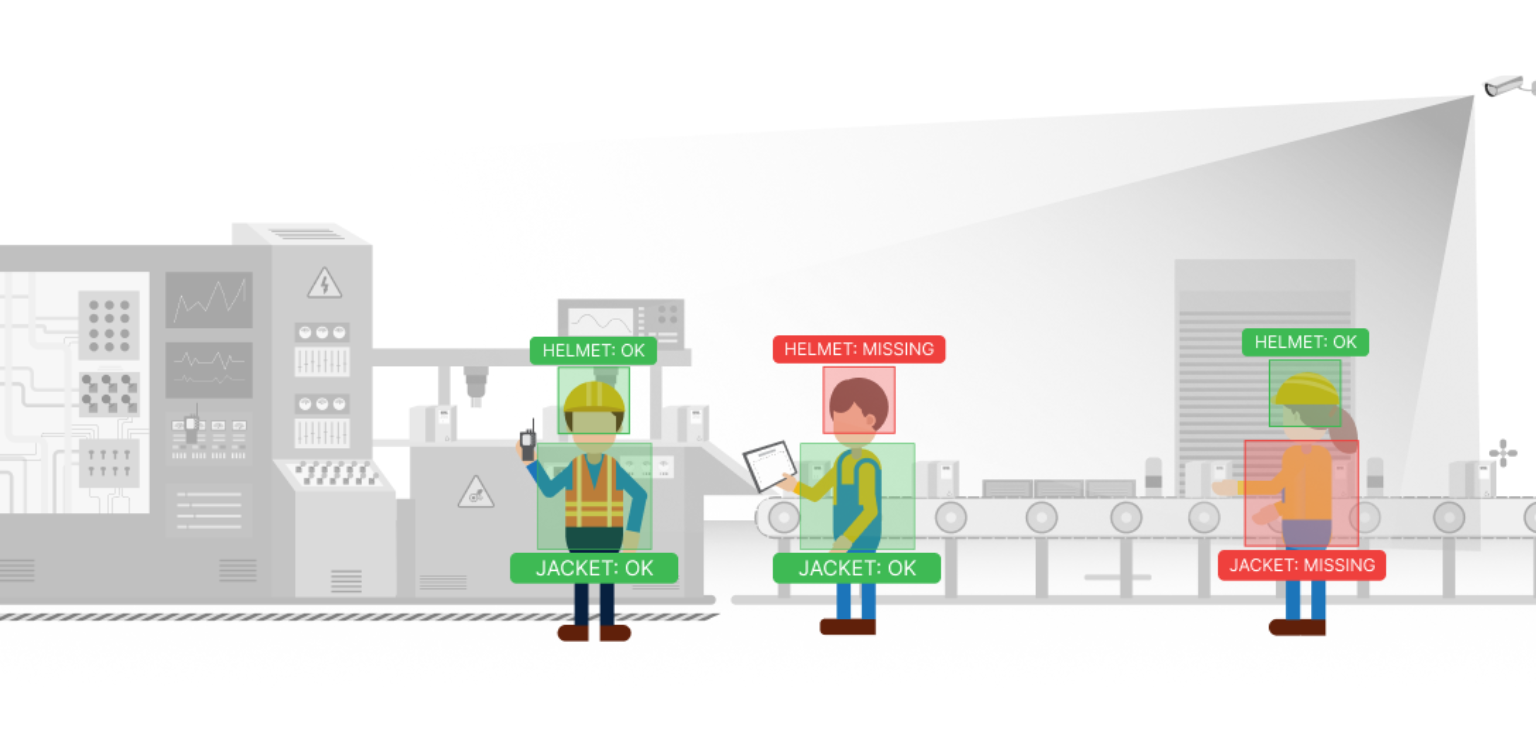

2. Safety as a Constant, Not a Checklist

In the US, liability and OSHA compliance are massive concerns. Gotilo Inspect includes robust PPE Detection and Zone Monitoring. It instantly flags if a worker enters a hazardous area without a hard hat or vest. It turns safety from a reactive policy into a proactive, always-on shield.

3. Data Privacy & Edge Execution

US clients are rightly protective of their data. Because Gotilo Inspect is optimized for Edge AI (running locally on your hardware), your proprietary production data doesn’t need to leave the building. It’s fast, secure, and bandwidth-efficient.
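To make the PPE Detection and Zone Monitoring idea from point 2 concrete, here is a minimal sketch of the decision logic only. It is an illustration, not how Gotilo Inspect is implemented. It assumes an upstream vision model already reports each worker's floor position and the PPE items it detected. The `Worker` type, the `safety_alerts` function, and the labels `hard_hat` and `vest` are hypothetical names.

```python
from dataclasses import dataclass, field

Point = tuple[float, float]


@dataclass
class Worker:
    """One detected person (hypothetical structure for this sketch)."""
    worker_id: str
    position: Point                      # floor position in image coordinates
    ppe: set[str] = field(default_factory=set)  # PPE labels the model detected


def point_in_polygon(point: Point, polygon: list[Point]) -> bool:
    """Ray-casting test: count polygon edges crossed by a ray going right."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge spans the ray's height
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


REQUIRED_PPE = {"hard_hat", "vest"}  # assumed requirement for the zone


def safety_alerts(workers: list[Worker], zone: list[Point],
                  required: set[str] = REQUIRED_PPE) -> list[tuple[str, list[str]]]:
    """Return (worker_id, missing items) for workers inside the zone without full PPE."""
    alerts = []
    for w in workers:
        missing = required - w.ppe
        if missing and point_in_polygon(w.position, zone):
            alerts.append((w.worker_id, sorted(missing)))
    return alerts


# A square hazardous zone and three detected workers.
zone = [(0, 0), (10, 0), (10, 10), (0, 10)]
workers = [
    Worker("w1", (5, 5), {"vest"}),              # in zone, no hard hat
    Worker("w2", (5, 5), {"hard_hat", "vest"}),  # in zone, fully equipped
    Worker("w3", (20, 5), set()),                # outside the zone
]
print(safety_alerts(workers, zone))  # [('w1', ['hard_hat'])]
```

A check like this needs only positions and labels, so it can run on the same local hardware as the detector, in line with the edge execution described in point 3.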

We aren’t just selling a solution; we are selling the peace of mind that comes with knowing your facility sees everything, every time.

When Vision Met the World, Twice in Japan

Inside the Gotilo-verse
